-

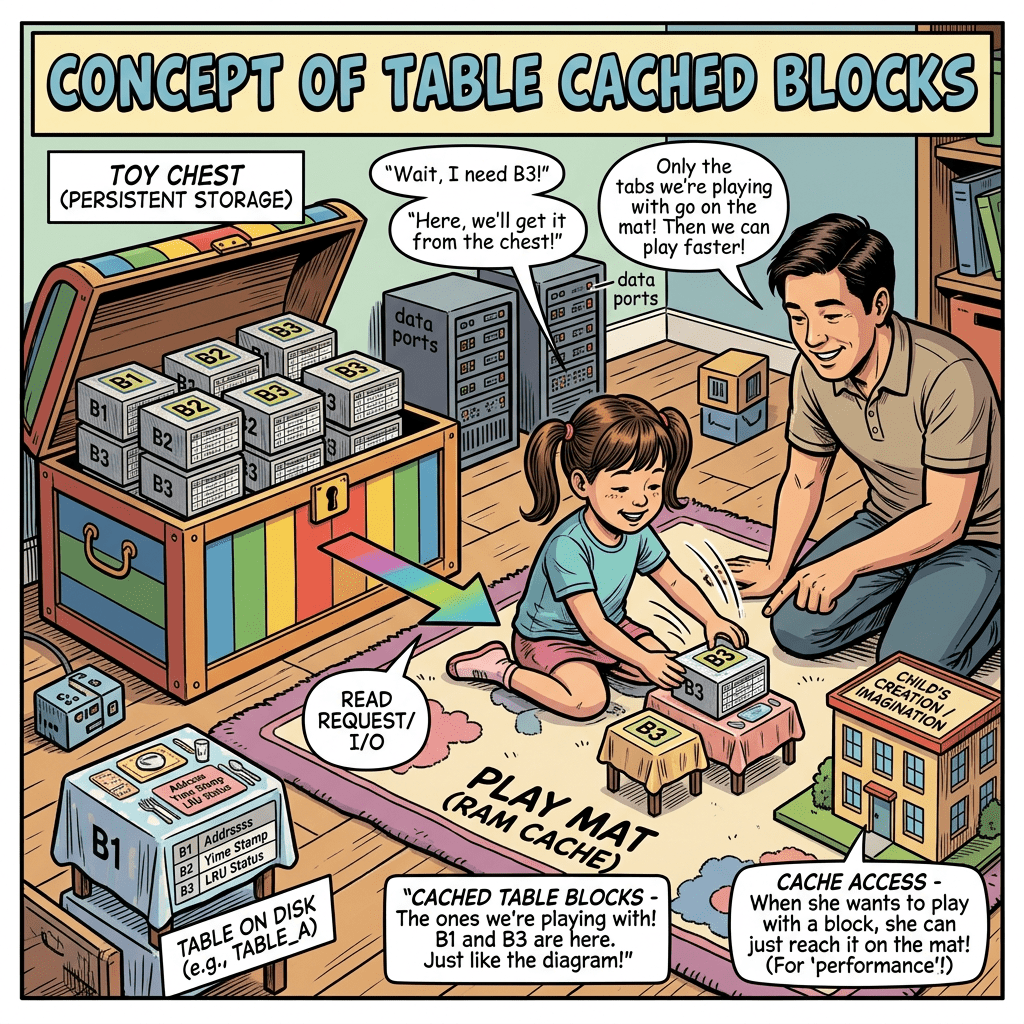

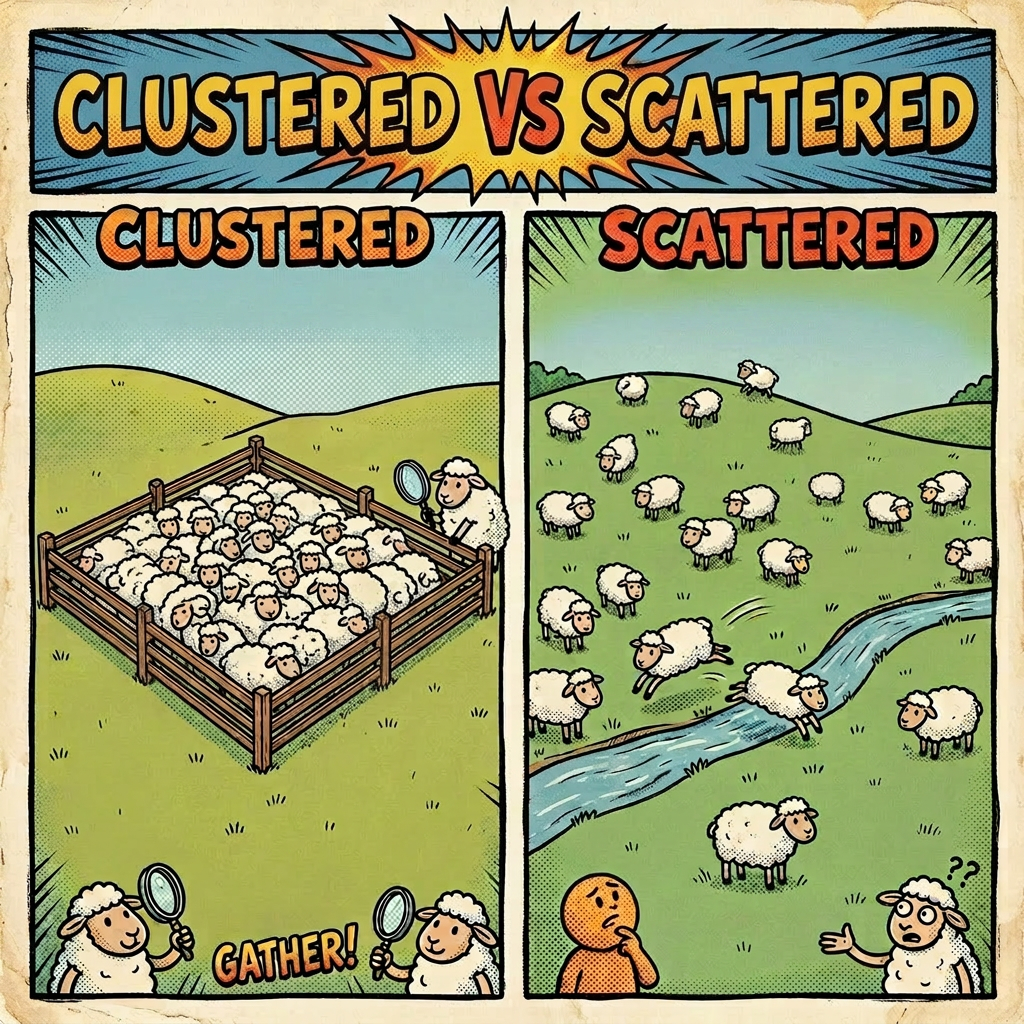

Continue reading →: Performance: Clustering Data

Continue reading →: Performance: Clustering DataIn my previous post I spoke about using TABLE_CACHED_BLOCKS to make the CLUSTERING_FACTOR for indexes more realistic by having Oracle assume there was more than 1 block from the table in the buffer cache, helping the optimizer select indexes more appropriately. But a way to seriously improve performance is to…

-

Continue reading →: Oracle Transparent Hugepages

Continue reading →: Oracle Transparent HugepagesI have only just found out (thanks Sascha) that Oracle has changed its stance on transparent hugepages, depending upon which linux kernel you are using. If you are using UEK7 (basically, Oracle Linux 8U7+ and OL9), you should be setting it to madvise instead of never. Oracle19 documentation about Transparent…

-

Continue reading →: Oracle Database: Global Stats Changes After Partition Truncate

Continue reading →: Oracle Database: Global Stats Changes After Partition TruncateI may be late to the party on this one, but it certainly surprised me during a database migration from Oracle 11G to Oracle 19.26 recently (yes, there’s a surprising amount of databases still on 11G – released in 12 years ago, and 10G, and even 9i, 8i, 8.0 and…

-

Continue reading →: ORA-04021 timeout occurred while waiting to lock object during stats gather

Continue reading →: ORA-04021 timeout occurred while waiting to lock object during stats gatherI recently came across an interesting change in behaviour when gathering stats. Patch 32781163 (Deadlock on library cache lock on MV refresh and dbms_stats gathering the same object) was applied to an Oracle 19C system, and as well as curing the deadlock problem the client was experiencing it also changed…

-

Continue reading →: Infinity – Old Oracle Numbers

Continue reading →: Infinity – Old Oracle NumbersA long time ago, Oracle had a couple of special numbers that you were allowed to store in a NUMBER datatype. They were Infinity and Negative Infinity! If you selected these numbers (using SQL*Plus) , they showed up as “~” and “-~“, although it is worth noting that if you’re…

-

Continue reading →: Unlocking Insights: Why Conferences Matter

Continue reading →: Unlocking Insights: Why Conferences MatterI very much enjoy speaking at conferences, and talking to everyone there. The insights that you get into how other companies are running their systems is incredible. The innovative approaches that different people and companies have to solving the same problems never ceases to amaze me, and the only way…

-

Continue reading →: Oracle Data Migration Validation

Continue reading →: Oracle Data Migration ValidationData migration is common. Moving data from one database to another, either via Datapump, Goldengate, unload/load scripts or some other method has risk. You are going to have to check. Frequently, I see the rows being counted. Any nothing else. If we have 10 rows in the source, and 10…

-

Continue reading →: Oracle Statistics Gathering Timeout

Continue reading →: Oracle Statistics Gathering TimeoutJanuary 2024 – Oracle 12C, 19C, 21C, 23C+ Gathering object statistic in Oracle is important. The optimizer needs metadata about the object, such as the amount of rows and number of distinct values in a column, to help it decide the optimum way to access your data. This takes effort,…

-

Continue reading →: Fundamental Security Part Eight – Unified Audit

Continue reading →: Fundamental Security Part Eight – Unified AuditJanuary 2024 – Oracle 19C+ If you are Oracle 12.1 onwards, and you have not explicitly disabled it, you are using Unified Audit We previously discussed Data Encryption At Rest. Now lets talk about Unified Audit, and why you should be using it When you create an Oracle database, there…

-

Continue reading →: Fundamental Security Part Seven– Data Encryption At Rest

Continue reading →: Fundamental Security Part Seven– Data Encryption At RestOctober 2023 – Oracle 19C+ What is Data Encryption At Rest and why should we use it? We have looked at Data Encryption in Transit, encrypting your network traffic. Everyone should be doing this. But what about Encrypting Data At Rest? Data that is stored permanently (on your hard drives).…