I was in a discussion at an Oracle Meetup this week, and the subject of RAC in a virtualized environment, specifically Oracle VM (OVM), came up.

Here are a couple of the points we discussed.

pingtarget

There was a lack of awareness of a common problem, which has a solution built into Oracle Grid Infrastructure 12.1.0.2 and later. In a virtualized environment, the network components are also virtualized, and a network failure on the host is not always propagated to the guests. As a result, the guest O/S can fail to detect the failure and the virtual NIC remains up. Grid Infrastructure (GI) will not perform a VIP failover, because it cannot see the failure even though the network is unavailable.

To resolve this, Oracle has added the option of a “pingtarget” for each public network defined in GI. GI performs a keep-alive check against an external device, usually something like the default gateway, much like the heartbeat on the cluster interconnect. If the target stops responding, GI can treat the public network as failed even though the virtual NIC still reports as up.

Before

    srvctl config network
    Network 1 exists
    Subnet IPv4: 192.168.0.160/255.255.255.224/eth1, static
    Subnet IPv6:
    Ping Targets:
    Network is enabled
    Network is individually enabled on nodes:
    Network is individually disabled on nodes:

The default gateway makes a good ping target. For this subnet, the gateway is 192.168.0.161.
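As a quick sanity check on that address, the subnet arithmetic can be sketched in shell. This is only an illustration: the function names are made up for this example, and it assumes the gateway sits on the first usable host address, which is a common convention rather than a rule. Confirm the real gateway with your network team.

```shell
# Sketch: derive the usable host range of an IPv4 subnet from the network
# address and netmask shown by "srvctl config network".
# All function names here are illustrative, not part of any Oracle tool.

# Convert a dotted-quad address to a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1            # split the address into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Convert a 32-bit integer back to dotted-quad form.
int_to_ip() {
    echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print the first and last usable host addresses of the subnet.
host_range() {
    net=$(ip_to_int "$1")
    mask=$(ip_to_int "$2")
    base=$(( net & mask ))                 # network address
    hostbits=$(( 4294967295 - mask ))      # all-ones host part
    first=$(( base + 1 ))                  # first usable host
    last=$(( base + hostbits - 1 ))        # one below the broadcast address
    echo "$(int_to_ip "$first") $(int_to_ip "$last")"
}

host_range 192.168.0.160 255.255.255.224
# prints: 192.168.0.161 192.168.0.190
```

The netmask 255.255.255.224 is a /27, so the subnet holds 30 usable addresses, and 192.168.0.161 is the first of them.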

    srvctl modify network -k 1 -pingtarget 192.168.0.161

After

    srvctl config network
    Network 1 exists
    Subnet IPv4: 192.168.0.160/255.255.255.224/eth1, static
    Subnet IPv6:
    Ping Targets: 192.168.0.161
    Network is enabled
    Network is individually enabled on nodes:
    Network is individually disabled on nodes:
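If you look after several clusters, the before/after check can be scripted. The following is a minimal sketch, assuming the output format shown in this post. The function names are my own, and the functions read the `srvctl config network` output on stdin so they can be tried without a cluster.

```shell
# Sketch: report whether ping targets are configured, based on the
# "Ping Targets:" line of "srvctl config network" output read from stdin.
# Function names are illustrative; the output layout is assumed to match
# the listing in this post.

# Print whatever follows "Ping Targets:", with surrounding blanks trimmed.
ping_targets() {
    sed -n 's/^[[:space:]]*Ping Targets:[[:space:]]*//p' | sed 's/[[:space:]]*$//'
}

# Print a one-line verdict and return non-zero if no target is set.
check_ping_targets() {
    targets=$(ping_targets)
    if [ -n "$targets" ]; then
        echo "OK: ping targets configured: $targets"
    else
        echo "WARNING: no ping targets configured"
        return 1
    fi
}

# On a cluster node you would pipe the real output in, for example:
#   srvctl config network | check_ping_targets
```

With more than one public network defined, the output will contain several "Ping Targets:" lines, so treat this as a starting point rather than a finished check.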

All safe!

Server Pools

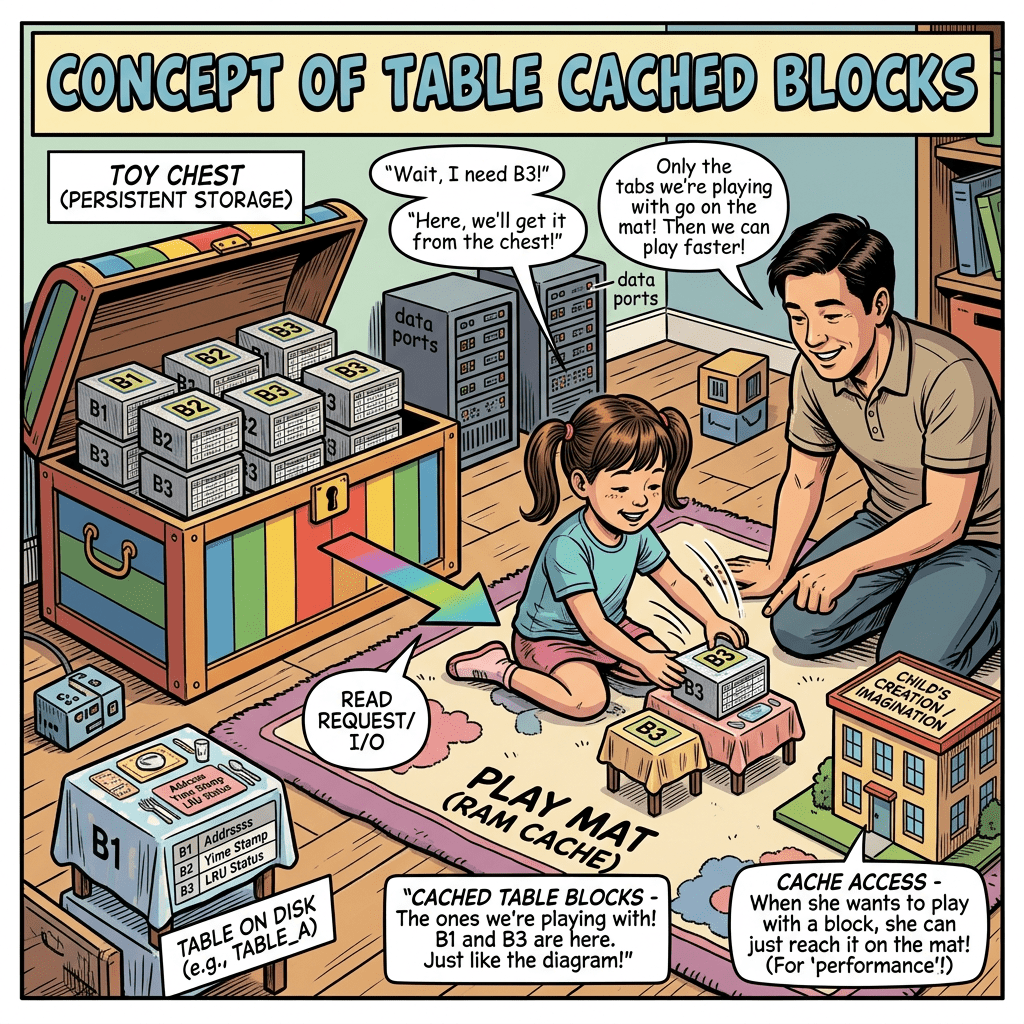

A second item we discussed was Server Pools in OVM. Each RAC guest should be on a different host; otherwise you have not eliminated the host as a Single Point Of Failure (SPOF). A second, less obvious SPOF is the Server Pool disk.

A Server Pool is a logical collection of servers with similar CPU models, backed by a pool filesystem LUN (and an IP address prior to release 3.4), within which we can create and migrate VM guests. For a RAC installation, each RAC node should be in a different server pool, as well as on different physical hardware.

In this image, the RAC nodes of a cluster should be spread so that there is one in each server pool. This configuration can safely support a 2-node cluster despite having 4 servers, with one node created in “OVS-Pool-2” on server “ovs02”. The second node should be in “OVS-Pool-1” and can be on “ovs01”, “ovs11” or “ovs12”.

Guests can be live migrated between these 3 servers, as they are all in the same server pool.
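The placement rule can be expressed as a simple check. This is a sketch only: the input format (one line per RAC guest, giving guest name, server pool and server) is something I made up for illustration and is not the output of any OVM tool, and the guest names in the usage example are hypothetical.

```shell
# Sketch: verify that no two RAC guests of one cluster share a server pool
# or a physical server. Reads lines of "<guest> <server-pool> <server>"
# on stdin (a hypothetical inventory format, not OVM output).
check_placement() {
    awk '
        { pool[$2]++; host[$3]++ }      # count guests per pool and per server
        END {
            bad = 0
            for (p in pool) if (pool[p] > 1) { print "shared server pool: " p; bad = 1 }
            for (h in host) if (host[h] > 1) { print "shared server: " h; bad = 1 }
            if (!bad) print "placement OK"
            exit bad
        }'
}

# Example with two hypothetical guests, rac1 and rac2:
#   printf 'rac1 OVS-Pool-2 ovs02\nrac2 OVS-Pool-1 ovs11\n' | check_placement
```

Note that a live migration inside OVS-Pool-1 keeps the layout valid, because the second node stays in its own pool whichever of the three servers it runs on.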
