I have only just found out (thanks Sascha) that Oracle has changed its stance on transparent hugepages, depending upon which Linux kernel you are using.

If you are using UEK7 (basically, Oracle Linux 8 Update 7 and later, and Oracle Linux 9), you should set it to madvise instead of never.

Oracle 19c documentation about Transparent Hugepages
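If you are not sure which kernel a server is running, the release string from `uname -r` tells you. The sketch below assumes that UEK kernels carry `uek` in their release string and that UEK7 is based on Linux 5.15 (UEK6 is 5.4, UEK8 is 6.12), so any UEK kernel at 5.15 or above counts as "UEK7 or later". `is_uek7_or_later` is a hypothetical helper, not an Oracle tool.

```shell
#!/bin/bash
# Hypothetical helper: decide whether a kernel release string is UEK7 (5.15) or later.
# Assumption: UEK kernels contain "uek" in the release string, e.g. 5.15.0-...el9uek.x86_64
is_uek7_or_later() {
  local rel="$1"
  # Non-UEK kernels (e.g. the Red Hat Compatible Kernel) are reported as "no"
  if [[ $rel != *uek* ]]; then
    echo "no"; return 1
  fi
  local ver=${rel%%-*}     # 5.15.0-300... -> 5.15.0
  local major=${ver%%.*}   # 5
  local rest=${ver#*.}     # 15.0
  local minor=${rest%%.*}  # 15
  if (( major > 5 || (major == 5 && minor >= 15) )); then
    echo "yes"
  else
    echo "no"; return 1
  fi
}

# Check the running kernel
rel=$(uname -r)
echo "$rel: UEK7 or later? $(is_uek7_or_later "$rel")"
```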

Before UEK7:

    $ cat /sys/kernel/mm/transparent_hugepage/enabled
    always madvise [never]

UEK7 and later:

    $ cat /sys/kernel/mm/transparent_hugepage/enabled
    always [madvise] never
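The active mode is the word in square brackets. If you want to check it from a script (for example across a number of servers), a minimal sketch is below. `thp_mode` is a hypothetical helper; the path defaults to the sysfs file shown above but can be pointed at any file with the same format.

```shell
#!/bin/bash
# Hypothetical helper: print the active (bracketed) value from a THP sysfs file,
# e.g. "always [madvise] never" -> "madvise".
thp_mode() {
  local file="${1:-/sys/kernel/mm/transparent_hugepage/enabled}"
  # Capture whatever sits between "[" and "]" and print only that
  sed -n 's/.*\[\([^]]*\)].*/\1/p' "$file"
}

echo "enabled: $(thp_mode /sys/kernel/mm/transparent_hugepage/enabled)"
echo "defrag:  $(thp_mode /sys/kernel/mm/transparent_hugepage/defrag)"
```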

What are Hugepages?

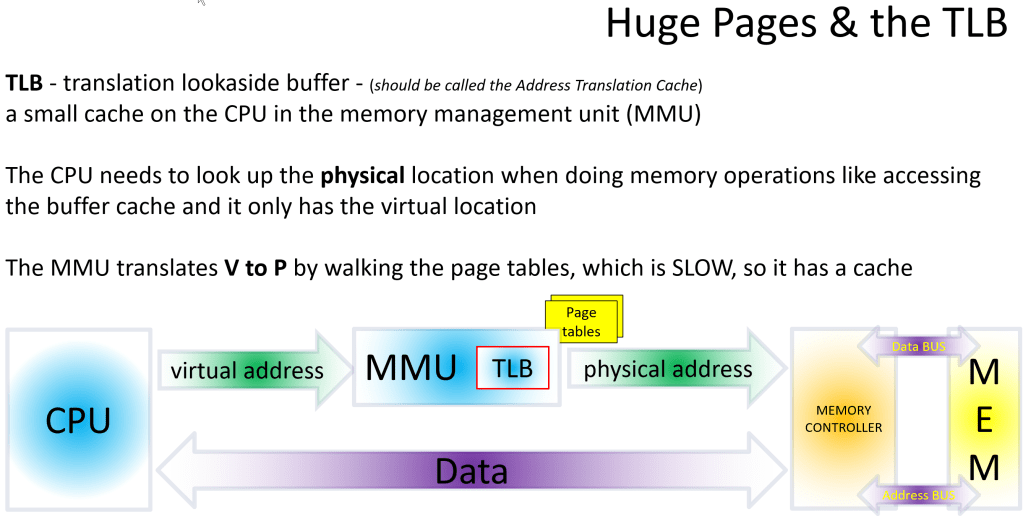

If you are running on Linux, you should ALWAYS configure Hugepages to hold your SGA (in /etc/sysctl.conf: vm.nr_hugepages=). This reserves memory for the SGA in 2MB pages instead of 4KB pages, so far fewer page-table entries are needed to map it (saving memory, because with 4KB pages every process that attaches the SGA needs its own page tables to map it) and each Translation Lookaside Buffer (TLB) entry covers 512 times as much memory, so TLB misses are much rarer. This improves performance even on bare metal, and much more so with virtualisation. The TLB is a small on-chip cache in the Memory Management Unit (MMU) which caches the translation of a virtual memory address to a physical memory address (in RAM), so the address can be resolved without a page walk down the page tables (up to 5 levels) to find the physical memory location.
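To put some numbers on this, the sketch below works out how many 2MB hugepages a given SGA needs, and roughly how much page-table space a process needs to map it with each page size. It assumes x86-64 (8-byte page-table entries), 2MB hugepages (check Hugepagesize in /proc/meminfo) and a hypothetical 16GB SGA; in practice you would set vm.nr_hugepages a little above the SGA size, and the helper names are illustrative only.

```shell
#!/bin/bash
# Number of pages of $2 KB needed to hold an SGA of $1 MB,
# rounded up so the whole SGA fits.
hugepages_needed() {
  local sga_kb=$(( $1 * 1024 )) hp_kb=$2
  echo $(( (sga_kb + hp_kb - 1) / hp_kb ))
}

# Approximate size (KB) of the leaf page-table entries needed to map
# $1 pages, assuming 8-byte entries as on x86-64.
pte_kb() {
  echo $(( $1 * 8 / 1024 ))
}

sga_mb=16384                               # hypothetical 16GB SGA
small=$(hugepages_needed "$sga_mb" 4)      # 4KB pages
huge=$(hugepages_needed "$sga_mb" 2048)    # 2MB hugepages
echo "vm.nr_hugepages = $huge (at least)"
echo "4KB pages: $small pages, ~$(pte_kb "$small") KB of page-table entries per process"
echo "2MB pages: $huge pages, ~$(pte_kb "$huge") KB of page-table entries per process"
```

With these inputs a 16GB SGA needs vm.nr_hugepages of at least 8192, and a process mapping all of it with 4KB pages needs roughly 32MB of page-table entries, against roughly 64KB with 2MB pages.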

IMPORTANT! Regardless of the transparent_hugepage setting, you still need to configure the (standard) Hugepages for the SGA manually.
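One way to confirm that the SGA really is using the hugepages you configured is to look at the HugePages_* counters in /proc/meminfo before and after starting the instance. HugePages_Rsvd counts pages promised to a mapping (such as the SGA) but not yet touched, and those pages are still included in HugePages_Free, so the sketch below adds them back. `hugetlb_usage` is a hypothetical helper; the file argument exists only so it can be pointed at a saved copy of meminfo.

```shell
#!/bin/bash
# Hypothetical helper: summarise HugeTLB (explicit hugepage) usage from a
# meminfo-format file. Rsvd pages are committed but not yet faulted in,
# and are still counted in Free.
hugetlb_usage() {
  local file="${1:-/proc/meminfo}"
  awk '/^HugePages_Total:/ {t=$2}
       /^HugePages_Free:/  {f=$2}
       /^HugePages_Rsvd:/  {r=$2}
       /^Hugepagesize:/    {sz=$2}
       END { printf "total=%d committed=%d unused=%d size_kb=%d\n", t, t-f+r, f-r, sz }' "$file"
}

hugetlb_usage
```

If the instance started with hugepages, `committed` multiplied by the hugepage size should roughly match the SGA size.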

So why the change?

First and foremost, madvise behaves exactly like never for any program that does not call madvise() to ask for hugepages on its memory. It’s safe to set for Oracle 19c, for example, which I understand (but have not checked) will not take advantage of the madvise setting as it’s not coded to do so. Oracle 26ai does appear to have some madvise calls in the code.
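One way to check this for a running process is the VmFlags line in /proc/<pid>/smaps: the kernel marks memory regions that have been advised with madvise(MADV_HUGEPAGE) with the `hg` flag. The sketch below counts such regions for one PID. `count_hg_vmas` is a hypothetical helper and has not been verified against Oracle 19c or 26ai, so treat a result as a hint rather than proof.

```shell
#!/bin/bash
# Hypothetical helper: count memory regions (VMAs) in an smaps-format file
# whose VmFlags include "hg", i.e. regions advised with madvise(MADV_HUGEPAGE).
count_hg_vmas() {
  local smaps="$1"
  awk '/^VmFlags:/ { for (i = 2; i <= NF; i++) if ($i == "hg") { n++; break } }
       END { print n + 0 }' "$smaps" 2>/dev/null
}

# Usage: ./count_hg.sh <pid>   (defaults to this shell's own PID)
pid="${1:-$$}"
echo "PID $pid: $(count_hg_vmas "/proc/$pid/smaps") region(s) with MADV_HUGEPAGE"
```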

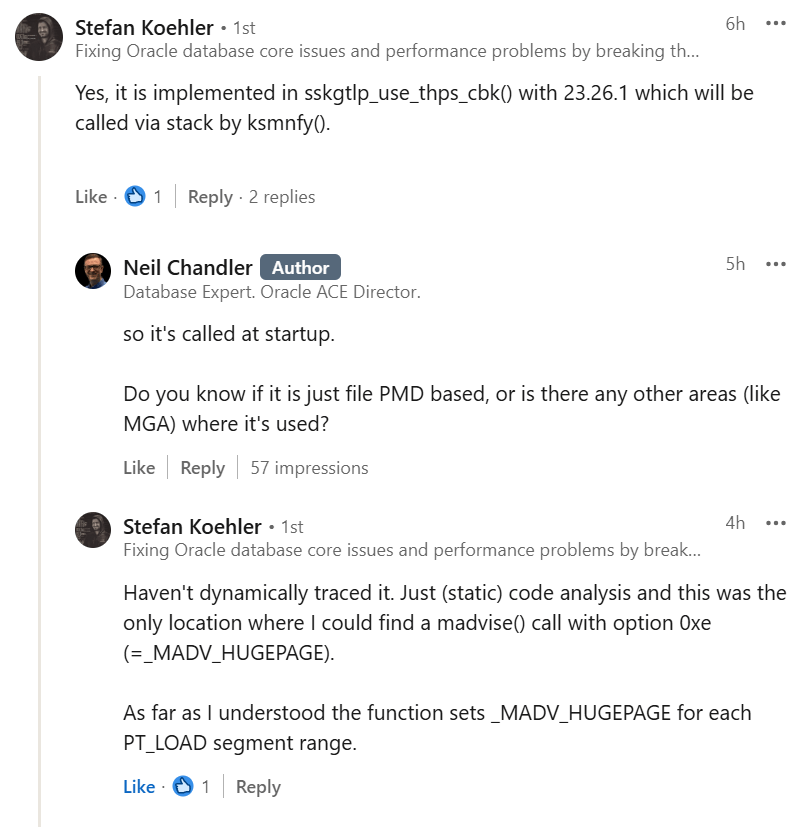

Update 2026-04-23: info from Stefan Koehler, which suggests (but doesn’t prove) that my observational analysis is correct.

DO NOT set transparent_hugepage=always (note: this is the default), as Transparent Hugepages can cause memory allocation delays at runtime (i.e. whilst the kernel coalesces small 4K pages into a contiguous lump big enough to allocate a hugepage).
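If you want to see whether THP allocation is stalling on an existing system, /proc/vmstat keeps counters for THP page-fault allocations (thp_fault_alloc), fallbacks to small pages (thp_fault_fallback) and direct compaction stalls (compact_stall). The sketch below samples them twice and prints the growth; a steadily rising compact_stall with THP set to always would be consistent with the delays described above, though it does not by itself prove THP is the cause. `thp_stall_delta` and the 5 second default interval are arbitrary, hypothetical choices.

```shell
#!/bin/bash
# Hypothetical helper: given two /proc/vmstat snapshots, print how much each
# THP/compaction counter grew between them.
thp_stall_delta() {
  awk -v list="thp_fault_alloc thp_fault_fallback compact_stall" '
    BEGIN { n = split(list, names, " "); for (i = 1; i <= n; i++) want[names[i]] = 1 }
    NR == FNR { before[$1] = $2; next }        # first file: remember old values
    ($1 in want) { print $1, $2 - before[$1] } # second file: print the growth
  ' "$1" "$2"
}

interval="${1:-5}"   # seconds between snapshots (arbitrary default)
before=$(mktemp); after=$(mktemp)
cat /proc/vmstat > "$before"
sleep "$interval"
cat /proc/vmstat > "$after"
thp_stall_delta "$before" "$after"
rm -f "$before" "$after"
```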

What difference does setting madvise make?

Now that’s the question! I’ve done some preliminary testing, but the short answer is: maybe a little.

The longer answer: I have tested on Oracle Linux 9 with Oracle 26ai.

You can see how many anonymous transparent hugepages have been allocated using:

    $ cat /proc/meminfo | grep ^Anon
    AnonPages:        581720 kB
    AnonHugePages:         0 kB
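/proc/meminfo also has system-wide counters for shared-memory and file-backed THP, which give a quick overall view before digging into per-process smaps. Which of these lines exist depends on the kernel version, so the sketch below simply prints whichever are present; `thp_meminfo` is a hypothetical helper.

```shell
#!/bin/bash
# Hypothetical helper: print the THP-related counters present in a
# meminfo-format file (defaults to /proc/meminfo). Older kernels may
# not have all of these lines.
thp_meminfo() {
  local file="${1:-/proc/meminfo}"
  grep -E '^(AnonHugePages|ShmemHugePages|ShmemPmdMapped|FileHugePages|FilePmdMapped):' "$file"
}

thp_meminfo
```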

So far, I have not been able to detect any AnonHugePages being allocated with madvise. However, I have noticed a difference in file-backed THP (FilePmdMapped). To see this you need to read /proc/<pid>/smaps and add up all of the FilePmdMapped entries. I wrote a bit of code to do this for all Oracle processes:

    #!/bin/bash
    # thpp.sh transparent huge pages per (oracle) process
    # grab AnonHugePages and FilePmdMapped from /proc/$pid/smaps
    # sum it up for each process
    # note: querying /proc/$pid/smaps is expensive. do not do this too much...
    date "+%Y.%m.%d %H:%M:%S" | tee -a thpp.out
    echo "." | tee -a thpp.out
    echo "=== THP /sys/kernel/mm/transparent_hugepage/enabled ===" | tee -a thpp.out
    cat /sys/kernel/mm/transparent_hugepage/enabled | tee -a thpp.out
    echo "." | tee -a thpp.out
    echo "=== THP /sys/kernel/mm/transparent_hugepage/defrag ===" | tee -a thpp.out
    cat /sys/kernel/mm/transparent_hugepage/defrag | tee -a thpp.out
    echo "." | tee -a thpp.out
    echo "=== THP usage by Oracle processes ===" | tee -a thpp.out
    # get a list of PIDs for oracle or ora_ processes
    for pid in $(pgrep -f "oracle|ora_"); do
      anon=$(grep AnonHugePages "/proc/$pid/smaps" 2>/dev/null | awk '{sum+=$2} END {print sum}')
      if [ "${anon:-0}" -gt 0 ]; then
        # get the command name
        cmd=$(ps -p "$pid" -o cmd=)
        echo "PID $pid ($cmd): $((anon/1024)) MB in THP" | tee -a thpp.out
      fi
    done
    echo "." | tee -a thpp.out
    echo "=== THP FILE usage by Oracle processes from smaps ===" | tee -a thpp.out
    for pid in $(pgrep -f "oracle|ora_"); do
      # sum the per-mapping values (kB); anchor on "Rss:" and "Pss:" so that any
      # other field whose name starts with Rss or Pss is not added in as well
      filepmd=$(grep FilePmdMapped "/proc/$pid/smaps" 2>/dev/null | awk '{sum+=$2} END {print sum}')
      rssmem=$(grep "^Rss:" "/proc/$pid/smaps" 2>/dev/null | awk '{sum+=$2} END {print sum}')
      pssmem=$(grep "^Pss:" "/proc/$pid/smaps" 2>/dev/null | awk '{sum+=$2} END {print sum}')
      if [ "${rssmem:-0}" -gt 0 ]; then
        # get the command name
        cmd=$(ps -p "$pid" -o cmd=)
        echo "PID $pid ($cmd): $((filepmd/1024)) MB file THP : ($((rssmem/1024)) MB Rss : $((pssmem/1024)) MB Pss)" | tee -a thpp.out
      fi
    done

Running the script gives the following output (trimmed for readability).

Rss is the amount of memory resident for the process, but as this is misleading (much of it is shared with other processes) I also give Pss, the proportional set size, in which each shared page is divided by the number of processes sharing it. There are only 3 user processes. Whilst I have not included the Oracle background processes in the output, we see the same pattern with those too.

[never]: small amount of file THP

    2026.04.20 22:44:53
    .
    === THP /sys/kernel/mm/transparent_hugepage/enabled ===
    always madvise [never]
    .
    === THP /sys/kernel/mm/transparent_hugepage/defrag ===
    always defer defer+madvise madvise [never]
    .
    === THP usage by Oracle processes ===
    .
    === THP FILE usage by Oracle processes from smaps ===
    [snip]
    PID 3636 (oraclecdb1 (LOCAL=NO)): 16 MB file THP : (147 MB Rss : 30 MB Pss)
    PID 3672 (oraclecdb1 (LOCAL=NO)): 6 MB file THP : (113 MB Rss : 17 MB Pss)
    PID 3698 (oraclecdb1 (LOCAL=NO)): 2 MB file THP : (104 MB Rss : 15 MB Pss)

[madvise]: much larger file THP

    2026.04.20 23:53:48
    .
    === THP /sys/kernel/mm/transparent_hugepage/enabled ===
    always [madvise] never
    .
    === THP /sys/kernel/mm/transparent_hugepage/defrag ===
    always defer defer+madvise [madvise] never
    .
    === THP usage by Oracle processes ===
    .
    === THP FILE usage by Oracle processes from smaps ===
    [snip]
    PID 5678 (oraclecdb1 (LOCAL=NO)): 164 MB file THP : (228 MB Rss : 33 MB Pss)
    PID 5704 (oraclecdb1 (LOCAL=NO)): 150 MB file THP : (205 MB Rss : 23 MB Pss)
    PID 5730 (oraclecdb1 (LOCAL=NO)): 138 MB file THP : (192 MB Rss : 18 MB Pss)

This is NOT definitive. It’s just a background measurement of FilePmdMapped, but madvise would seem to be having a positive effect on file PMD-mapped THPs: between 138 and 164 MB per session with madvise, against 2 to 16 MB with never.
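If you want to repeat this measurement more cheaply, note that reading smaps is expensive. On kernels that provide /proc/<pid>/smaps_rollup (added in Linux 4.14, so present on UEK7), the kernel has already summed the per-mapping values, so a lighter variation of the same report could look like the sketch below. This is not what produced the figures above; `rollup_mb` is a hypothetical helper, and the process matching is copied from the thpp.sh script.

```shell
#!/bin/bash
# Hypothetical helper: print field $2 (kB in the file) as whole MB from a
# smaps_rollup-format file, or 0 if the field is missing.
rollup_mb() {
  local file="$1" field="$2"
  awk -v f="$field:" '$1 == f { printf "%d\n", $2 / 1024; found = 1 }
                       END { if (!found) print 0 }' "$file" 2>/dev/null
}

for pid in $(pgrep -f "oracle|ora_"); do
  r="/proc/$pid/smaps_rollup"
  [ -r "$r" ] || continue
  cmd=$(ps -p "$pid" -o cmd=)
  echo "PID $pid ($cmd): $(rollup_mb "$r" FilePmdMapped) MB file THP :" \
       "($(rollup_mb "$r" Rss) MB Rss : $(rollup_mb "$r" Pss) MB Pss)"
done
```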

Recommendation

Follow Oracle’s recommendation and use madvise for UEK7 and later 🙂

Ensure that defrag (/sys/kernel/mm/transparent_hugepage/defrag) is also set to madvise where relevant.
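A minimal sketch of making the change, assuming root and a grubby-based system such as Oracle Linux 8/9. Writing to sysfs takes effect immediately but is lost at reboot; adding `transparent_hugepage=madvise` to the kernel command line makes `enabled` persist. That boot parameter does not cover `defrag`, so defrag has to be reapplied at boot by other means (for example a tuned profile or a systemd unit). The script only changes anything when run with `--apply`, and `THP_DIR` is a hypothetical override so it can be tried against a scratch directory first.

```shell
#!/bin/bash
# Sketch: set THP "enabled" and "defrag" to madvise (needs root for the real sysfs files).
# THP_DIR is a hypothetical override, e.g. to try this on a scratch directory.
THP_DIR="${THP_DIR:-/sys/kernel/mm/transparent_hugepage}"

set_thp_madvise() {
  local f
  for f in enabled defrag; do
    # Writing the keyword selects it; reading sysfs back shows it in [brackets]
    echo madvise > "$THP_DIR/$f" || return 1
    echo "$f: $(cat "$THP_DIR/$f")"
  done
}

if [ "${1:-}" = "--apply" ]; then
  set_thp_madvise || { echo "failed (are you root?)" >&2; exit 1; }
else
  echo "dry run: re-run as root with --apply to change $THP_DIR"
fi

# Persist "enabled" across reboots on grubby-based systems (uncomment to apply);
# defrag is not covered by this boot parameter.
# grubby --update-kernel=ALL --args="transparent_hugepage=madvise"
```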

Additional:

For a list of OEL versions and their related kernel releases, check here

Please be aware that if you use the Oracle preinstall rpms (e.g. oracle-ai-database-preinstall-26ai-1.0-1.el9.x86_64), they will change the transparent_hugepage setting in grub for you.

If you are running both Oracle 19c and Oracle 26ai on the same servers, the last preinstall rpm installed determines the setting of this parameter… be careful!
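Because the last preinstall rpm wins, it is worth checking both what the running kernel was booted with (/proc/cmdline) and what is configured for the next boot (`grubby --info=ALL` prints an `args=` line for each installed kernel). The sketch below pulls the transparent_hugepage= value out of a command-line string; `thp_boot_arg` is a hypothetical helper, and it assumes that if the parameter appears more than once, the last occurrence is the one that takes effect.

```shell
#!/bin/bash
# Hypothetical helper: print the transparent_hugepage= value from a kernel
# command line, or "unset" if it is not there. If the parameter appears more
# than once, this assumes the last occurrence is the one that takes effect.
thp_boot_arg() {
  local -a args
  local arg val="unset"
  read -ra args <<< "$1"
  for arg in "${args[@]}"; do
    case "$arg" in
      transparent_hugepage=*) val="${arg#transparent_hugepage=}" ;;
    esac
  done
  echo "$val"
}

# What the running kernel was booted with
echo "current boot: $(thp_boot_arg "$(cat /proc/cmdline)")"
# What is configured for the next boot (needs root on grubby-based systems):
#   grubby --info=ALL | grep '^args='
```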
